May 6, 2026

The Most Dangerous Thing You Can Optimize For

Last year, a friend sent me “Evolution as Backstop for Reinforcement Learning” by Gwern Branwen, one of the most fascinating essays I've come across in a long time. This is my attempt to explain it to a layman, the way I'd explain it to myself before I'd read it. The essay is dense, jumping between:

- evolution

- corporations

- markets

- reinforcement learning

- pain

- Bayesian statistics

- multicellular organisms

- Soviet economic planning

…and somehow connects all of them into one giant idea.

But underneath all the complexity is a surprisingly simple insight. There are two ways systems learn:

- Fast feedback

- Reality

And reality eventually wins.

The entire essay, in one example

Imagine trying to become incredible at Muay Thai. There are two kinds of feedback you could use.

Fast feedback

- your coach correcting your form

- shadowboxing

- film review

- conditioning metrics

- gym performance

- technique drills

Real feedback

- did you win the fight?

- did you get knocked out?

The fast feedback is efficient. The real feedback is truth. But the problem is that efficient feedback can drift away from reality. You can look beautiful on pads and still lose actual fights.

That's basically the entire essay.

Gwern describes this as an “inner optimization” constrained by an “outer optimization.”

The outer layer is reality itself:

- evolution

- bankruptcy

- death

- survival

- winning

The inner layer is the shortcut system:

- metrics

- KPIs

- reinforcement learning rewards

- management systems

- heuristics

- social incentives

The inner system learns quickly. The outer system keeps it honest.
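Here is how I picture the two layers in code. This is a toy sketch of my own, not anything from Gwern's essay: `true_outcome`, `inner_optimize`, `outer_optimize`, and all the numbers are made-up assumptions. The inner loop takes many cheap steps toward a proxy target and never looks at reality. The outer loop checks reality once per proxy and keeps whichever proxy led to the best real result.

```python
def true_outcome(behavior):
    # Outer loss: slow and expensive, but grounded.
    # Assumption for illustration: reality is happiest at behavior == 10.
    return -abs(behavior - 10.0)

def inner_optimize(proxy_target, steps=50, lr=0.2):
    # Fast loop: many cheap updates that chase the proxy, never reality.
    behavior = 0.0
    for _ in range(steps):
        behavior += lr * (proxy_target - behavior)
    return behavior

def outer_optimize(proxy_targets):
    # Slow loop: one real evaluation per proxy, keep the proxy that
    # produced the best real outcome.
    return max(proxy_targets, key=lambda t: true_outcome(inner_optimize(t)))

# Hypothetical candidate proxies; none is exactly right.
best_proxy = outer_optimize([2.0, 7.0, 9.5, 14.0])
```

The inner loop does almost all of the work. The outer loop only decides which inner loop deserves to keep existing.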

The credit assignment problem

One of the coolest ideas in the essay is something called the “credit assignment problem.”

Imagine if the only feedback you got in life was: SUCCESS or FAILURE. Nothing else. No hints. No intermediate feedback. No idea what worked.

You wouldn't know:

- which habit mattered

- which decision mattered

- what caused success

- what caused failure
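A small simulation makes the problem concrete. Everything here is an assumption I chose for illustration: three habits, only the second one (index 1) actually matters, and the final outcome flips 20% of the time. The only way to assign credit from pure SUCCESS or FAILURE is to compare success rates with and without each habit, and that takes a lot of lived episodes.

```python
import random

def run_episode(rng, noise=0.2):
    # Three habits picked at random; only habit 1 matters (assumed).
    habits = [rng.random() < 0.5 for _ in range(3)]
    success = habits[1]
    if rng.random() < noise:
        # Noise: sometimes the outcome flips for no visible reason.
        success = not success
    return habits, success

def estimate_credit(episodes):
    # Credit for a habit = success rate with it minus success rate without it.
    credit = []
    for i in range(3):
        with_habit = [s for h, s in episodes if h[i]]
        without_habit = [s for h, s in episodes if not h[i]]
        credit.append(sum(with_habit) / len(with_habit)
                      - sum(without_habit) / len(without_habit))
    return credit

rng = random.Random(0)
episodes = [run_episode(rng) for _ in range(2000)]
credit = estimate_credit(episodes)
```

With thousands of episodes the habit that matters stands out. With only a handful, which is all most of us get for the big decisions, the estimates are much shakier.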

Reality's feedback is sparse and noisy. So humans invent intermediate metrics:

- grades

- KPIs

- gym PRs

- productivity systems

- revenue

- likes and followers

These are useful shortcuts. But shortcuts are dangerous because they can stop reflecting reality.

Someone can become incredible at:

- interviewing without being a good engineer

- looking productive without producing

- networking without creating value

- sounding smart without understanding anything

The proxy becomes the goal.
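A toy version of that drift, as a sketch under assumptions of my own: you have ten units of effort, the metric rewards "polish" three times as much as real skill, and reality only pays for skill. The names `proxy_score`, `true_value`, and `allocate` are hypothetical.

```python
def proxy_score(skill, polish):
    # What gets measured. Assumed weights: polish shows up 3x more than skill.
    return skill + 3.0 * polish

def true_value(skill, polish):
    # What reality pays for: skill only.
    return skill

def allocate(objective, budget=10, step=1):
    # Greedy: spend each unit of effort wherever the objective rises most.
    skill = polish = 0
    for _ in range(budget):
        if objective(skill + step, polish) >= objective(skill, polish + step):
            skill += step
        else:
            polish += step
    return skill, polish

gamed = allocate(proxy_score)     # effort steered by the metric
grounded = allocate(true_value)   # effort steered by reality
```

The metric-chaser ends up with the higher score and nothing reality will pay for. Nobody decided to cheat. The optimizer just followed the gradient it was given.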

Why evolution and markets still matter

One of the strongest arguments in the essay is that evolution and markets are “dumb” but grounded.

Markets don't care about your narrative. Evolution doesn't care about your intentions. Reality doesn't care about your explanations. Only outcomes.

That's why these systems work despite being horribly inefficient. Evolution basically does:

- try random things

- kill what fails

- keep what survives

for millions of years. Brutal. Wasteful. But real.
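That three-step loop is short enough to write down. This is a minimal sketch, not a model of real biology: the `fitness` function, the population size, and the mutation scale are all assumptions picked for illustration.

```python
import random

def evolve(fitness, population, generations=200, survivors=5,
           mutation_scale=0.5, seed=0):
    rng = random.Random(seed)
    for _ in range(generations):
        # Try random things: every survivor spawns four mutated copies.
        children = [x + rng.gauss(0, mutation_scale)
                    for x in population for _ in range(4)]
        # Kill what fails, keep what survives (parents compete too).
        ranked = sorted(population + children, key=fitness, reverse=True)
        population = ranked[:survivors]
    return population

def fitness(x):
    # Hypothetical "reality": the best possible outcome is at x == 3.
    return -(x - 3.0) ** 2

best = evolve(fitness, [0.0] * 5)[0]
```

Nothing in the loop knows why a candidate did well. It only sees outcomes, and it throws away most of what it tries along the way.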

And that grounding matters.

Why organizations lose their edge

Evolution needs three things: variation, selection, and replication. Corporations have variation. Every company is different. They have selection. The unfit go bankrupt. But they don't replicate. There is no corporate DNA. A successful company can't clone itself, and when it spins off a division or acquires a startup, the new entity rarely inherits the original's secret sauce.

That missing third leg is why corporations don't compound across generations the way species, software, or neural networks do. The banks of the 1500s look strikingly similar in their failure modes to the banks of today. Without replication, culture can be lived but not transmitted. The “founder phase” can't be copied forward.

Small groups often have:

- hunger

- speed

- strong identity

- intense culture

- obsession

Then over time:

- bureaucracy appears

- systems replace people

- internal politics grow

- self-preservation becomes the goal

Organizations slowly stop optimizing for reality and start optimizing for themselves. You can feel this everywhere: corporations, universities, student organizations, governments, startups.

The metric slowly becomes survival instead of truth.

The most visceral example: pain

The same framework applies to something everyone has felt today.

Why is pain painful? Why does a stubbed toe demand your attention rather than just informing you, the way a notification might? You could imagine a body that simply knew something was wrong without it hurting. A quiet flag in some corner of your awareness. Why didn't evolution build that?

Because pain is the outer loss for the body. It's the one signal the rest of your cognition isn't allowed to override.

People with congenital insensitivity to pain make the case starkly. They don't live longer or more comfortably for being spared the discomfort. They accumulate injuries the rest of us avoid without thinking: walking on bones that have already broken, scalding their mouths on hot drinks, wearing down joints from postures that should have hurt.

Without the painful signal, the planning brain optimizes for whatever it cares about. Novelty. Curiosity. Getting somewhere quickly. And the body quietly degrades underneath.

Researchers once tried to engineer around this. They built “pain prosthetics”: instrumented gloves and socks that would beep or shock the wearer when sensors detected dangerous pressure or heat. It didn't hold. Patients learned to disable the warnings whenever the warnings became inconvenient.

That's the whole essay in one anecdote. If you can override the outer loss, you will. Pain has to be painful, not just informative, because we can't be trusted with the off switch.

Why willpower runs out

The same logic explains something else everyone has felt this week.

The old story about willpower was that it ran on glucose. Your brain burned through sugar making decisions, and that's why you crumble at the end of a long day. That story turned out to be mostly wrong. The metabolic cost of thinking is tiny.

The newer story is that the feeling of running out of willpower is an opportunity-cost signal. Your brain forces you to stop focusing on any one thing because if you could focus indefinitely on whatever felt rewarding in the moment, you'd ignore the rest of your life. Fatigue is the alarm that says this can't be the only thing. Burnout is the long version of the same alarm.

This is why restorative hobbies tend to be maximally different from the day job. A programmer is best served by something social, physical, and outdoors. Not another solitary screen task. The alarm isn't really about exhaustion. It's about variety.

It's also why splitting work into small completable units helps with procrastination. The inner reward signal gets something to latch onto, and the alarm quiets down.

Mental fatigue is a kind of psychic pain. It serves the same purpose: it stops the inner optimizer from running too long without checking back in with reality.

The slow optimizer built the fast one

There's one more move worth flagging. The two-level structure is recursive. The slow optimizer doesn't just constrain the fast one. It builds it.

Evolution didn't hardcode every behavior an organism would ever need. It built a brain. The brain is the fast optimizer evolution produced so it wouldn't have to wait millions of years to respond to a tiger.

Markets did the same with companies. Rather than coordinating every individual transaction, the market grew firms that plan internally on much faster timescales. Training does the same with neural networks. In each case the slow process produces a faster, smarter system that handles most of the work. The slow process just checks the answer.

The pattern shows up everywhere once you look for it: evolution to humans, markets to companies, training to neural nets, pain to planning, reality to heuristics. Each fast layer is a huge efficiency gain. Each one is also liable to drift, which is exactly why the slow layer can't be removed.

The healthiest systems are the ones where the fast optimizer stays tethered to the slow one.

The real skill

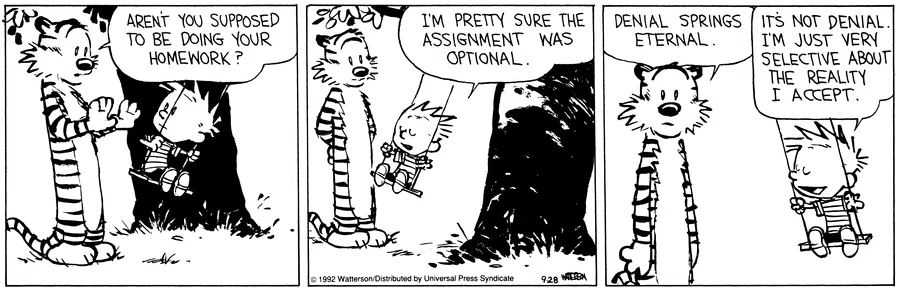

You can optimize for prestige instead of skill, followers instead of impact, credentials instead of competence, aesthetics instead of substance, productivity instead of creation. The scary thing is the inner optimization feels really good. It rewards you quickly. Reality is slower. But reality eventually cashes the check.

The real skill might simply be this: keeping your fast feedback loops connected to reality. Using metrics without worshipping them. Using systems without becoming trapped by them. Using optimization without forgetting the actual goal.

And maybe that's why this essay stuck with me so much. It isn't really about reinforcement learning.

It's about how humans slowly lose touch with reality through abstraction. And why the healthiest systems are the ones that eventually reconnect to truth.

Credit

Full credit to Gwern Branwen for the original essay and ideas: “Evolution as Backstop for Reinforcement Learning.”